Think of search engines as digital librarians that organize the web’s content into a searchable catalog. Every page they find gets evaluated, cataloged, and positioned based on relevance and quality. In this article, we explain the three-step process that determines your visibility.

Key Takeaways

- Search visibility follows a strict chain: crawl → index → rank. If Googlebot can’t crawl a page, its content can’t be indexed or ranked.

- Crawl budget matters on larger sites; duplicates, errors, and messy URLs waste crawler attention.

- Indexing filters pages—thin, duplicate, noindex, or canonical conflicts can keep content out of results.

- Rankings depend on relevance + authority (links/E-E-A-T) + UX/technical performance (Core Web Vitals, mobile, HTTPS).

- Modern SERPs are shaped by AI + personalization + zero-click features, so snippet and schema optimization boost visibility.

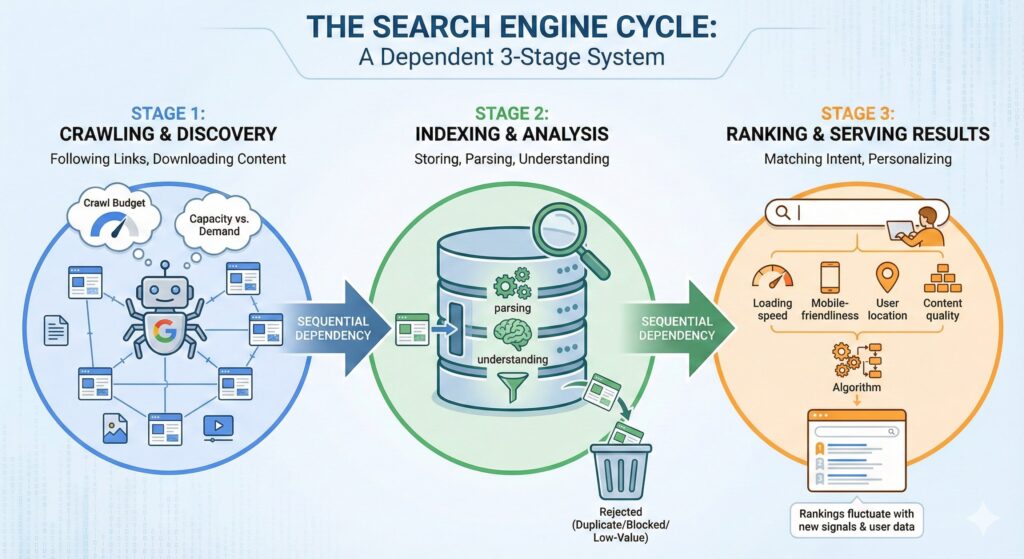

The Search Engine Cycle

Search engines operate through a dependent three-stage system where each phase builds on the previous one. If Googlebot can’t crawl a page, its content never reaches the indexing phase, so it has no ranking potential. This sequential dependency explains why technical SEO issues that prevent crawling can devastate organic revenue streams.

The process starts with discovery and ends with user-facing results, but each stage has specific requirements and limitations that affect your visibility.
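To make the dependency concrete, here is a toy Python model of the three stages. The `Page` fields and the single `relevance` number are simplifying assumptions for illustration, not how Google represents pages. Each stage only sees what the previous one let through, so a page that can’t be crawled never reaches ranking, however relevant it is.

```python
from dataclasses import dataclass


@dataclass
class Page:
    url: str
    crawlable: bool   # e.g. allowed by robots.txt and returns HTTP 200
    indexable: bool   # e.g. no noindex, not a duplicate of another canonical URL
    relevance: float  # hypothetical stand-in for all ranking signals combined


def crawl(pages):
    """Stage 1: only pages the crawler can fetch move on."""
    return [p for p in pages if p.crawlable]


def index(crawled):
    """Stage 2: only crawled pages that pass index checks are stored."""
    return {p.url: p for p in crawled if p.indexable}


def rank(indexed):
    """Stage 3: only indexed pages can be ordered for a query."""
    return sorted(indexed.values(), key=lambda p: p.relevance, reverse=True)


def search_pipeline(pages):
    return [p.url for p in rank(index(crawl(pages)))]


if __name__ == "__main__":
    site = [
        Page("/guide", crawlable=True, indexable=True, relevance=0.9),
        Page("/blocked", crawlable=False, indexable=True, relevance=0.99),
        Page("/thin", crawlable=True, indexable=False, relevance=0.5),
        Page("/faq", crawlable=True, indexable=True, relevance=0.7),
    ]
    print(search_pipeline(site))  # ['/guide', '/faq']
```

Even though `/blocked` has the highest relevance score, it never appears, because it was filtered out at the crawl stage.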

Stage 1: Crawling and Discovery

Web crawlers like Googlebot systematically browse the internet by following links from page to page. These automated programs download text, images, videos, and other content while respecting crawl budget limitations. Crawl budget is the number of URLs Googlebot can and wants to crawl, shaped by your site’s crawl capacity and Google’s crawl demand for your URLs.

Popular sites that publish and update content frequently typically see higher crawl demand, while new or rarely linked domains get less crawler attention. Google notes that crawl budget is mainly a concern for very large or rapidly changing sites.
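Link-based discovery can be sketched as a breadth-first crawl. The sketch below assumes a hypothetical `fetch` callable that returns a page’s HTML (or `None`) and uses `max_pages` as a crude stand-in for crawl budget; real crawlers also check robots.txt, schedule revisits, and render JavaScript.

```python
from collections import deque
from html.parser import HTMLParser
from urllib.parse import urldefrag, urljoin


class LinkExtractor(HTMLParser):
    """Collects absolute http(s) URLs from <a href="..."> tags."""

    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        href = dict(attrs).get("href")
        if not href:
            return
        # Resolve relative links and drop #fragments, which point at the same page.
        url, _fragment = urldefrag(urljoin(self.base_url, href))
        if url.startswith(("http://", "https://")):
            self.links.append(url)


def discover(start_url, fetch, max_pages=100):
    """Breadth-first link discovery; max_pages stands in for crawl budget."""
    seen = {start_url}
    frontier = deque([start_url])
    crawled = []
    while frontier and len(crawled) < max_pages:
        url = frontier.popleft()
        html = fetch(url)  # hypothetical fetcher: returns HTML or None
        crawled.append(url)
        if html is None:
            continue
        parser = LinkExtractor(url)
        parser.feed(html)
        for link in parser.links:
            if link not in seen:
                seen.add(link)
                frontier.append(link)
    return crawled


if __name__ == "__main__":
    fake_web = {
        "https://example.com/": '<a href="/about">About</a> <a href="/blog#latest">Blog</a>',
        "https://example.com/about": '<a href="/">Home</a>',
        "https://example.com/blog": '<a href="/blog/post-1">First post</a>',
        "https://example.com/blog/post-1": "<p>No outgoing links.</p>",
    }
    print(discover("https://example.com/", fake_web.get))
```

A page that no other page links to (and that isn’t listed in a sitemap) is simply never added to the frontier, which is why internal linking matters for discovery.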

Stage 2: Indexing and Analysis

The search index functions as a massive database where Google stores and analyzes crawled content. Not every crawled page gets indexed: duplicate content, pages marked noindex, and low-value content are often excluded. Google’s indexing systems parse content structure, identify key topics, and map relationships between different pages.

This stage determines whether your content becomes eligible for search results.

Stage 3: Ranking and Serving Results

When users submit queries, search algorithms match their intent with indexed content using hundreds of ranking factors. The system considers query meaning, page relevance, content quality, loading speed, and mobile-friendliness, along with context such as the user’s location, language, device type, and search history.

Rankings fluctuate constantly as algorithms process new signals and user behavior data.

How Googlebot Crawls the Web

Googlebot operates autonomously, deciding which pages to crawl and how frequently based on site signals and crawler efficiency. The bot discovers new content primarily through internal and external links, making link structure critical for discoverability. Sites that respond quickly and provide clear navigation paths typically receive more thorough crawling.

Server response times and technical errors directly impact how much content Googlebot can access during each crawl session.
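Google describes its crawl capacity limit as rising when a site responds quickly and falling when it slows down or returns server errors. The class below is a hypothetical additive-increase/multiplicative-decrease (AIMD) model of that behavior; the thresholds and connection counts are made up, and Google’s actual algorithm is not public.

```python
from dataclasses import dataclass


@dataclass
class CrawlRateLimiter:
    """Toy AIMD model of crawl capacity (all numbers are hypothetical)."""
    connections: int = 2        # parallel fetches currently allowed
    min_connections: int = 1
    max_connections: int = 10
    slow_ms: float = 1000.0     # responses slower than this count as strain

    def record(self, status: int, response_ms: float) -> int:
        """Update the allowed parallelism after one fetch and return it."""
        if status >= 500 or response_ms > self.slow_ms:
            # Server errors or slow responses: back off sharply.
            self.connections = max(self.min_connections, self.connections // 2)
        else:
            # Healthy, fast response: probe for a little more capacity.
            self.connections = min(self.max_connections, self.connections + 1)
        return self.connections


if __name__ == "__main__":
    limiter = CrawlRateLimiter()
    for status, ms in [(200, 150), (200, 180), (200, 200), (503, 90), (200, 2500)]:
        print(status, ms, "->", limiter.record(status, ms))
```

In this model, three fast responses raise the allowance from 2 to 5, a single 503 halves it to 2, and one slow response drops it to 1: a few bad moments cost more crawl capacity than many good ones earn.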

Crawl Budget Optimization

Large sites often face crawl budget constraints where important pages get skipped if the crawler wastes time on low-value URLs. Common crawl budget drains include duplicate content, faceted navigation and session-ID parameters that generate near-identical URLs, infinite spaces such as endless calendar pages, soft 404 errors, and broken internal links. A clean URL structure and targeted robots.txt rules help steer crawlers toward valuable content.

Sites exceeding crawl budget limits may see delayed indexing of new content or updates.
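One practical way to find crawl-budget waste is to normalize the URLs in your server logs or crawl export and count how many variants collapse to the same page. This sketch assumes a hypothetical `IGNORED_PARAMS` set of tracking and session parameters, and assumes trailing slashes don’t change content on your site; both are site-specific decisions.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Hypothetical: query parameters that never change page content on this site.
IGNORED_PARAMS = {"utm_source", "utm_medium", "utm_campaign", "sessionid", "ref"}


def normalize_url(url):
    """Map URL variants of the same page to one canonical-looking form."""
    parts = urlsplit(url)
    path = parts.path or "/"
    if len(path) > 1:
        path = path.rstrip("/")  # assumes /shoes and /shoes/ serve the same page
    params = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key.lower() not in IGNORED_PARAMS
    ]
    query = urlencode(sorted(params))  # parameter order shouldn't matter
    # Lowercase scheme and host, drop the #fragment (never sent to the server).
    return urlunsplit((parts.scheme.lower(), parts.netloc.lower(), path, query, ""))


if __name__ == "__main__":
    crawled = [
        "https://Example.com/shoes/?utm_source=newsletter",
        "https://example.com/shoes#reviews",
        "https://example.com/shoes?color=red&size=9",
        "https://example.com/shoes?size=9&color=red&sessionid=abc123",
    ]
    unique = sorted({normalize_url(u) for u in crawled})
    print(f"{len(crawled)} crawled URLs -> {len(unique)} distinct pages")
    for u in unique:
        print(u)
```

Here four crawled URLs resolve to two distinct pages, so half of the crawler’s fetches were spent on duplicates.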

Technical Crawling Barriers

Several technical issues can block or limit Googlebot’s access to the affected pages, cutting off their ranking potential:

- Incorrect robots.txt files that disallow important sections

- Server errors (500, 503) during crawler visits

- JavaScript-heavy pages whose content or links depend on scripts that Googlebot can’t fetch or render

- Password-protected or login-required pages

- Infinite redirect loops or broken URL structures
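Misconfigured robots.txt rules are the easiest of these barriers to test. Python’s standard `urllib.robotparser` can check a list of important URLs against a rules file; the rules and URLs here are hypothetical. This parser uses simple prefix matching where the first matching rule wins, while Google follows RFC 9309 (longest match, `*` and `$` wildcards), so treat results for complex files as approximate.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: "Disallow: /blog" was meant for a staging folder
# but, as a prefix rule, it blocks every URL whose path starts with /blog.
ROBOTS_TXT = """\
User-agent: *
Disallow: /cart/
Disallow: /blog
"""


def blocked_urls(robots_txt, urls, user_agent="Googlebot"):
    """Return the URLs that the given user agent may not crawl."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


if __name__ == "__main__":
    important = [
        "https://example.com/",
        "https://example.com/blog/how-search-works",
        "https://example.com/cart/checkout",
    ]
    for url in blocked_urls(ROBOTS_TXT, important):
        print("Blocked:", url)
```

Running a check like this against your key landing pages after every robots.txt change catches the "one line hides a whole section" mistake before Googlebot does.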

The Search Index: Content Storage and Analysis

Google’s search index contains hundreds of billions of web pages, each analyzed for content quality, topic relevance, and user value. The indexing process involves natural language processing, entity recognition, and semantic analysis to understand content meaning beyond simple keyword matching. Pages with clear structure, relevant headings, and comprehensive topic coverage typically perform better during indexing.

Content that duplicates existing indexed material or provides minimal user value often gets filtered out before reaching the ranking stage.

Index Inclusion Factors

Several elements determine whether crawled content gets added to the search index:

- Content uniqueness and originality compared to existing indexed pages

- Page loading speed and technical accessibility

- Clear content structure with proper heading hierarchy

- Sufficient content depth to answer user queries

- Mobile-friendly design and responsive layout, since Google primarily indexes the mobile version of a page

- Absence of noindex directives or canonical conflicts
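The last item on that list can be checked automatically. This sketch uses Python’s built-in `html.parser` to read the robots meta tag, an optional `X-Robots-Tag` response header, and the canonical link. The `index_issues` helper is hypothetical and ignores edge cases such as crawler-specific header values (`googlebot: noindex`) or canonicals sent in HTTP headers.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin


class IndexSignals(HTMLParser):
    """Collects robots meta directives and the canonical URL from a page."""

    def __init__(self):
        super().__init__()
        self.directives = set()
        self.canonical = None

    def handle_starttag(self, tag, attrs):
        a = {name: (value or "") for name, value in attrs}  # names arrive lowercased
        if tag == "meta" and a.get("name", "").lower() in {"robots", "googlebot"}:
            self.directives.update(d.strip().lower() for d in a.get("content", "").split(","))
        elif tag == "link" and "canonical" in a.get("rel", "").lower().split():
            self.canonical = a.get("href") or None


def index_issues(page_url, html, headers=None):
    """Hypothetical helper: list reasons a page may be kept out of the index."""
    parser = IndexSignals()
    parser.feed(html)
    directives = set(parser.directives)
    # Simplification: assumes a plain X-Robots-Tag value like "noindex, nofollow".
    header_value = (headers or {}).get("X-Robots-Tag", "")
    directives.update(d.strip().lower() for d in header_value.split(","))
    issues = []
    if directives & {"noindex", "none"}:
        issues.append("noindex directive")
    if parser.canonical:
        canonical = urljoin(page_url, parser.canonical)
        if canonical != page_url:
            issues.append(f"canonical points to {canonical}")
    return issues


if __name__ == "__main__":
    html = ('<head><meta name="robots" content="noindex, follow">'
            '<link rel="canonical" href="/shoes"></head>')
    print(index_issues("https://example.com/shoes?color=red", html))
```

A canonical pointing elsewhere isn’t always a mistake (it’s the right call for filtered variants like `?color=red`), but it does mean this URL itself is unlikely to be the one that gets indexed.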

Entity Recognition and Semantic Understanding

Modern indexing systems identify entities (people, places, concepts) within content and build relationships between related topics. This semantic analysis helps Google understand content context and match it with user intent more accurately. Pages that establish clear entity relationships and provide comprehensive topic coverage gain advantages in the indexing process.

Structured data markup can enhance entity recognition and improve indexing accuracy.
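As an example of entity markup, an organization can describe itself with schema.org `Organization` JSON-LD and use `sameAs` to point at profiles that identify the same entity elsewhere. The helper and all values below are hypothetical placeholders.

```python
import json


def organization_jsonld(name, url, logo, same_as):
    """Hypothetical helper: schema.org Organization markup as a <script> tag."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "logo": logo,
        # Profiles elsewhere that refer to the same real-world entity.
        "sameAs": same_as,
    }
    return (
        '<script type="application/ld+json">\n'
        + json.dumps(data, indent=2)
        + "\n</script>"
    )


if __name__ == "__main__":
    print(organization_jsonld(
        name="Example Co",
        url="https://www.example.com",
        logo="https://www.example.com/logo.png",
        same_as=[
            "https://www.linkedin.com/company/example-co",
            "https://en.wikipedia.org/wiki/Example_Co",
        ],
    ))
```

The output is pasted into the page’s HTML; the `sameAs` links help tie "Example Co" on your site to the same entity on other sites.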

| Indexing Factor | Impact Level | Common Issues | Solutions |
|---|---|---|---|
| Content Quality | High | Thin or duplicate content | Create comprehensive, unique content |
| Technical Accessibility | High | Slow loading, mobile issues | Optimize Core Web Vitals, responsive design |
| Content Structure | Medium | Poor heading hierarchy | Use proper H1-H6 structure |
| Entity Signals | Medium | Unclear topic focus | Add structured data, clear entity mentions |

Search Algorithms and Ranking Factors

Google uses automated ranking systems that consider many factors and signals to rank results. These factors span content relevance, technical performance, user experience signals, and authority indicators like backlinks. The algorithm constantly evolves through machine learning and manual updates, with major changes potentially reshuffling rankings across entire industries.

Knowing core ranking factors helps prioritize SEO efforts for maximum impact.

Content Relevance Signals

Search algorithms analyze how well content matches user intent through keyword relevance, topic comprehensiveness, and semantic relationships. Pages that thoroughly cover topics while maintaining clear focus typically outrank shallow or off-topic content. Content freshness also plays a role, especially for time-sensitive queries or rapidly changing topics.

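Aggregated user interaction data, such as how searchers click on results, can provide additional relevance signals, although Google does not publish how specific metrics like click-through rate or time on page are used.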
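Lexical relevance is easiest to see with TF-IDF, a classic information-retrieval baseline that rewards terms that are frequent on a page but rare across the collection. This is a teaching sketch, not Google’s relevance model, which layers semantic understanding and many other signals on top; the page texts are hypothetical.

```python
import math
import re
from collections import Counter


def tokenize(text):
    return re.findall(r"[a-z0-9]+", text.lower())


def tf_idf_rank(query, pages):
    """Rank pages for a query by summed TF-IDF weight of the query terms."""
    tokenized = {url: tokenize(text) for url, text in pages.items()}
    n_pages = len(tokenized)
    # Document frequency: how many pages contain each term at least once.
    df = Counter(term for tokens in tokenized.values() for term in set(tokens))
    scores = {}
    for url, tokens in tokenized.items():
        counts = Counter(tokens)
        score = 0.0
        for term in set(tokenize(query)):
            if counts[term]:
                tf = counts[term] / len(tokens)  # term frequency on this page
                idf = math.log((1 + n_pages) / (1 + df[term])) + 1  # smoothed rarity
                score += tf * idf
        scores[url] = score
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)


if __name__ == "__main__":
    pages = {
        "/crawl-budget": "Crawl budget is how many URLs Googlebot can and wants to crawl on your site.",
        "/core-web-vitals": "Core Web Vitals measure loading, responsiveness, and visual stability.",
        "/about": "We are an agency that helps businesses grow.",
    }
    for url, score in tf_idf_rank("crawl budget googlebot", pages):
        print(f"{url:18} {score:.3f}")
```

Pure term matching like this misses pages that use different words for the same idea, which is the gap semantic systems close.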

Authority and Trust Indicators

External validation through backlinks remains a crucial ranking factor, with links from authoritative, relevant sites carrying more weight. The E-E-A-T framework (Experience, Expertise, Authoritativeness, Trustworthiness), defined in Google’s Search Quality Rater Guidelines, describes the kind of credibility Google’s systems aim to reward. Sites demonstrating clear expertise, author credentials, and trustworthy information sources gain ranking advantages.

Consistent publishing, a track record of accurate content, and positive reputation signals such as user reviews can reinforce a site’s perceived authority.
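The idea that links pass authority goes back to PageRank. Below is a minimal power-iteration version of the original formula with the commonly cited damping factor of 0.85; the link graph is hypothetical, and Google’s current link analysis is far more complex and not public.

```python
def pagerank(links, damping=0.85, iterations=50):
    """Power iteration over a link graph {page: [pages it links to]}."""
    pages = set(links) | {t for targets in links.values() for t in targets}
    n = len(pages)
    rank = {page: 1.0 / n for page in pages}
    for _ in range(iterations):
        # Every page gets the "random jump" share, then link shares are added.
        new_rank = {page: (1.0 - damping) / n for page in pages}
        for page in pages:
            targets = links.get(page, [])
            if targets:
                share = damping * rank[page] / len(targets)
                for target in targets:
                    new_rank[target] += share
            else:
                # Dangling page with no outlinks: spread its rank evenly.
                for other in pages:
                    new_rank[other] += damping * rank[page] / n
        rank = new_rank
    return rank


if __name__ == "__main__":
    site_links = {
        "home": ["guide", "pricing"],
        "guide": ["home"],
        "pricing": ["home"],
        "orphan-post": ["guide"],  # links out, but nothing links to it
    }
    for page, score in sorted(pagerank(site_links).items(), key=lambda kv: -kv[1]):
        print(f"{page:12} {score:.3f}")
```

The page everyone links to ends up with the most weight, while `orphan-post` keeps only the baseline random-jump share because no page links to it.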

Technical Performance Factors

Core Web Vitals focus on:

- Loading: Largest Contentful Paint (LCP)

- Responsiveness: Interaction to Next Paint (INP)

- Visual stability: Cumulative Layout Shift (CLS)
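Google evaluates each Core Web Vital at the 75th percentile of real page loads and buckets it as good, needs improvement, or poor. The thresholds below are Google’s published ones (LCP 2.5 s / 4 s, INP 200 ms / 500 ms, CLS 0.1 / 0.25). The sample values are invented, and the nearest-rank percentile is only one of several conventions, so results may differ slightly from field tools.

```python
import math

# Google's published "good" / "poor" boundaries for each Core Web Vital.
THRESHOLDS = {
    "LCP": (2500, 4000),  # milliseconds
    "INP": (200, 500),    # milliseconds
    "CLS": (0.1, 0.25),   # unitless layout-shift score
}


def p75(samples):
    """75th percentile using the nearest-rank method."""
    ordered = sorted(samples)
    return ordered[math.ceil(0.75 * len(ordered)) - 1]


def assess(metric, samples):
    good, poor = THRESHOLDS[metric]
    value = p75(samples)
    if value <= good:
        return value, "good"
    if value <= poor:
        return value, "needs improvement"
    return value, "poor"


if __name__ == "__main__":
    field_data = {
        "LCP": [1800, 2100, 2400, 3900],
        "INP": [120, 180, 260, 640],
        "CLS": [0.02, 0.05, 0.31, 0.40],
    }
    for metric, samples in field_data.items():
        value, status = assess(metric, samples)
        print(f"{metric}: p75={value} -> {status}")
```

Because the assessment uses the 75th percentile, a fast median isn’t enough: the slowest quarter of visits decides whether a page passes.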

Mobile-friendliness has become essential as mobile searches dominate traffic patterns. Secure HTTPS connections and clean URL structures also influence rankings, though typically as secondary factors.

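Page experience is not an on/off penalty: Google still shows the most relevant content even when its page experience is weaker, but persistent technical problems can hold a page back against competitors with similar relevance.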

AI Integration in Modern Search

Artificial intelligence transforms how search engines understand queries, analyze content, and generate results through systems like Google’s RankBrain and BERT. These AI models excel at interpreting conversational queries, understanding context, and matching user intent with relevant content even when exact keywords don’t appear. Machine learning algorithms continuously refine ranking decisions based on user behavior patterns and content performance data.

SERP features such as featured snippets, knowledge panels, and AI Overviews increasingly dominate search results pages.

Semantic Search Capabilities

Modern search algorithms understand relationships between concepts, synonyms, and related topics without requiring exact keyword matches. This semantic understanding allows search engines to surface relevant content even when users phrase queries differently than content creators. Content that establishes clear topic relationships and uses natural language variations performs better in semantic search environments.

Long-tail queries benefit significantly from semantic matching capabilities.
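A very reduced illustration of matching without exact keywords is query expansion with synonym groups. The hand-written `SYNONYMS` list is a hypothetical stand-in; modern systems such as BERT learn these relationships from data rather than from a fixed list.

```python
import re

# Hypothetical synonym groups; real search systems learn such relationships.
SYNONYMS = [
    {"cheap", "affordable", "budget", "inexpensive"},
    {"laptop", "notebook"},
    {"fix", "repair"},
]


def terms(text):
    return set(re.findall(r"[a-z]+", text.lower()))


def related(term):
    """The term plus every synonym from any group that contains it."""
    expanded = {term}
    for group in SYNONYMS:
        if term in group:
            expanded |= group
    return expanded


def semantic_match(query, page_text):
    """Share of query terms the page covers, either exactly or via a synonym."""
    query_terms, page_terms = terms(query), terms(page_text)
    if not query_terms:
        return 0.0
    covered = sum(1 for t in query_terms if related(t) & page_terms)
    return covered / len(query_terms)


if __name__ == "__main__":
    query = "fix cheap laptop"
    page = "Affordable notebook repair service"
    print("exact overlap:", terms(query) & terms(page))     # set()
    print("semantic match:", semantic_match(query, page))  # 1.0
```

The page shares no words with the query yet covers every concept in it, which is the situation long-tail searches create constantly.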

Personalization and User Context

Search results adapt based on individual user history, location, device type, and previous interactions with similar content. Personalization algorithms balance individual preferences with general relevance signals to create customized result sets. Local search queries receive heavy personalization based on geographic proximity and local business signals.

Understanding your audience’s search context helps optimize content for personalized results.
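For local queries, proximity can be blended with relevance. The sketch computes great-circle distance with the haversine formula and mixes a proximity score into the ranking. The `distance_weight` and `scale_km` parameters, the blend itself, and the coordinates are hypothetical choices for illustration, not Google’s local algorithm.

```python
import math


def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance between two points on a spherical Earth."""
    earth_radius_km = 6371.0
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    d_phi = math.radians(lat2 - lat1)
    d_lambda = math.radians(lon2 - lon1)
    a = math.sin(d_phi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(d_lambda / 2) ** 2
    return 2 * earth_radius_km * math.asin(math.sqrt(a))


def local_rank(results, user_lat, user_lon, distance_weight=0.5, scale_km=10.0):
    """Blend relevance with proximity; weights are hypothetical tuning knobs."""
    scored = []
    for name, relevance, lat, lon in results:
        distance = haversine_km(user_lat, user_lon, lat, lon)
        proximity = 1.0 / (1.0 + distance / scale_km)  # 1.0 nearby, toward 0 far away
        score = (1 - distance_weight) * relevance + distance_weight * proximity
        scored.append((name, round(distance, 1), round(score, 3)))
    return sorted(scored, key=lambda row: row[2], reverse=True)


if __name__ == "__main__":
    user = (51.5074, -0.1278)  # central London
    cafes = [
        ("Cafe A (Oxford)", 0.9, 51.7520, -1.2577),
        ("Cafe B (Soho)", 0.7, 51.5155, -0.1410),
    ]
    for row in local_rank(cafes, *user):
        print(row)
```

The more relevant cafe loses to the one a kilometre away, mirroring how "near me" style queries weight proximity heavily.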

Zero-Click Search and Answer Engines

Featured snippets, knowledge panels, and other rich results increasingly provide answers directly in search results without requiring clicks to websites. This zero-click trend changes how users interact with search results and affects traffic distribution across websites. Content optimized for featured snippet capture can gain significant visibility even without top organic rankings.

Answer engine optimization becomes crucial as AI-powered search tools like ChatGPT and Microsoft Copilot reshape user expectations.

Optimizing for Featured Snippets

Featured snippets typically extract content that directly answers specific questions in concise, well-structured formats. Lists, tables, and step-by-step instructions perform particularly well for snippet capture. Content that anticipates common user questions and provides clear, authoritative answers gains featured snippet opportunities.

Digit Solutions helps businesses structure content for maximum snippet visibility while maintaining comprehensive topic coverage.

Structured Data for Rich Results

Schema markup enables search engines to understand content context and display enhanced results like review stars, event information, or product details. Properly implemented structured data makes a page eligible for these rich results (Google does not guarantee it will show them), which can raise click-through rates and provide a competitive advantage. Different content types benefit from specific schema types, from local business markup to article schema.

Rich results often receive higher user attention and improved click-through rates compared to standard organic listings.
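For product pages, review stars and price details come from `Product` markup with nested `Offer` and `AggregateRating` objects. This sketch builds that JSON-LD with Python’s `json` module; the helper name and values are hypothetical, and markup should always be validated (for example with Google’s Rich Results Test) and match what is visible on the page.

```python
import json


def product_jsonld(name, description, price, currency, rating, review_count):
    """Hypothetical helper: Product markup that can qualify for price and star details."""
    return json.dumps(
        {
            "@context": "https://schema.org",
            "@type": "Product",
            "name": name,
            "description": description,
            "offers": {
                "@type": "Offer",
                "price": f"{price:.2f}",
                "priceCurrency": currency,
                "availability": "https://schema.org/InStock",
            },
            "aggregateRating": {
                "@type": "AggregateRating",
                "ratingValue": rating,
                "reviewCount": review_count,
            },
        },
        indent=2,
    )


if __name__ == "__main__":
    print(product_jsonld("Trail Runner 2", "Lightweight trail running shoe", 49, "USD", 4.6, 128))
```

The resulting JSON goes inside a `<script type="application/ld+json">` tag on the product page.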

Platforms That Help You Control the Crawl → Index → Rank Chain

If crawling is blocked, indexing becomes inconsistent, and rankings can’t compound—so the fastest wins usually come from tightening the technical path and then strengthening relevance + authority. These four platforms help you audit, monitor, and optimize the exact stages you covered (discovery, index eligibility, and ranking performance).

Image Source: Semrush

Semrush

Use it to spot crawl and technical SEO issues (site audit), then connect those fixes to what actually moves rankings (keyword gaps, competitor SERPs, and backlink signals). It’s especially useful for prioritizing which pages to improve first so crawl budget and authority get spent on the URLs that can realistically win.

Image Source: SE Ranking

SE Ranking

This is a practical “measure-and-improve” platform for the ranking stage: track keyword movement, monitor competitors, and validate whether your on-page + technical changes translate into better visibility. It also supports auditing so you can catch issues that prevent pages from becoming reliably indexable.

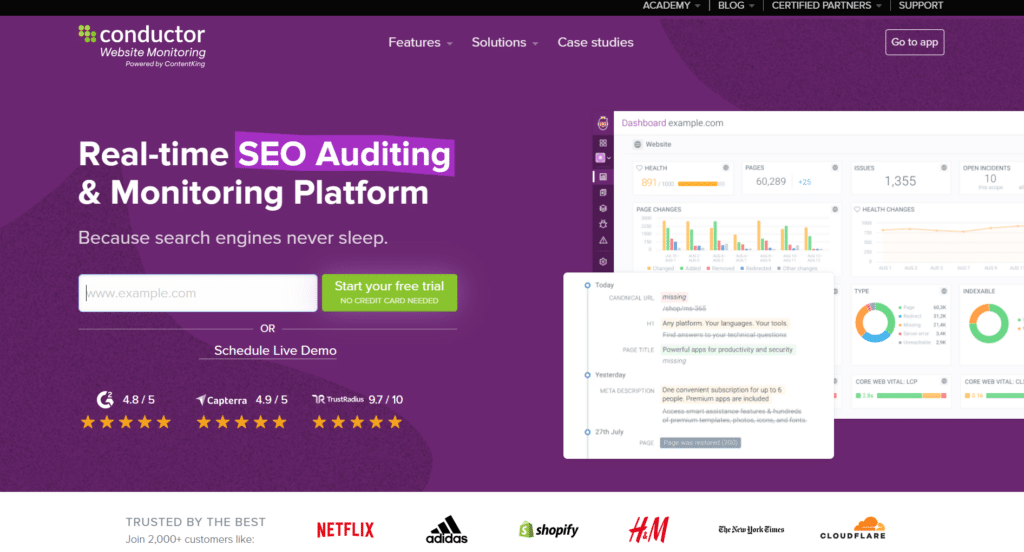

Image Source: Content King

Content King

Great for protecting the crawling and indexing stages over time—because it continuously watches for technical changes (broken links, unexpected noindex, page changes) that can quietly reduce index coverage. This helps keep your “search engine cycle” stable so rankings don’t drop due to preventable crawl/index problems.

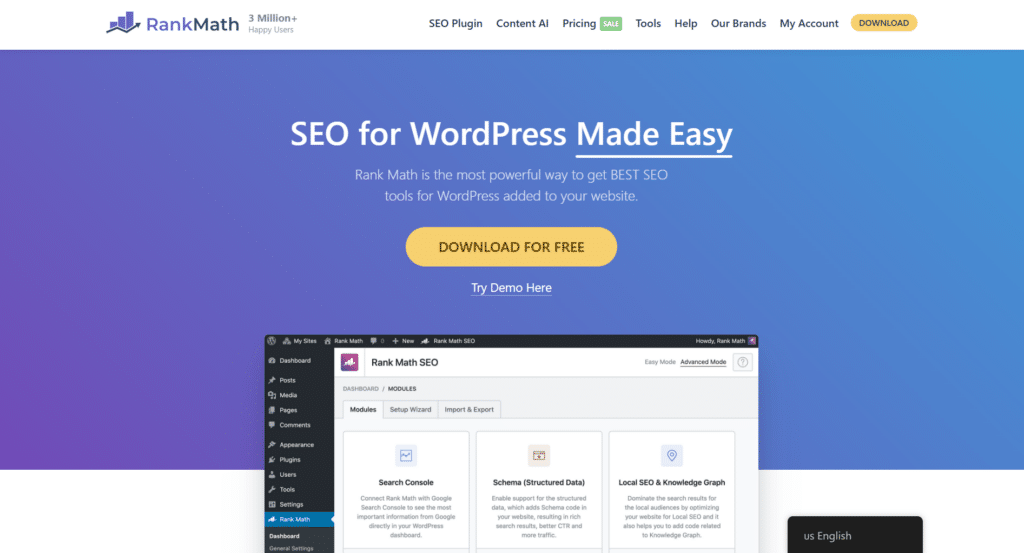

Image Source: Rank Math

Rank Math

On WordPress sites, it helps you implement clean on-page signals that support indexing and rich results (titles/meta, canonical handling, and structured data/schema). That directly ties into your snippet + schema section—making it easier for search engines to understand your content and display enhanced SERP features.

Conclusion

Search engines transform chaotic web content into organized, searchable results through systematic crawling, indexing, and ranking processes. Technical barriers that prevent crawling directly eliminate ranking potential and revenue opportunities. Modern algorithms prioritize user experience, content quality, and semantic relevance over traditional keyword optimization tactics.

If your pages aren’t getting crawled, indexed, and ranked consistently, you’re invisible—even with great content. Digit Solutions audits your crawl paths, index coverage, and technical blockers (robots.txt, redirects, JS rendering, Core Web Vitals), then builds an SEO + AEO roadmap that helps Google and answer engines surface your best pages first.

Get a free SEO audit and walk away with clear priorities: what to fix now, what to optimize next, and how to track crawl/index gains in Search Console.

FAQs

How Do Search Engines Work Step by Step?

Search engines:

- Discover pages by crawling links and sitemaps

- Render and understand content and code

- Store eligible pages in an index

- Interpret a user’s query and intent

- Rank indexed pages using many relevance and quality signals

- Show results, continuously updating them based on new crawls and performance data

We validate this end-to-end process in practice using tools like Google Search Console and industry crawlers.

What Are the Three Main Functions of a Search Engine?

The three core functions are crawling (finding pages), indexing (storing and organizing pages), and ranking (ordering results for a query). Strong SEO focuses on making each stage easy and reliable—technical accessibility for crawlers, clear structure for indexing, and intent-matched, trustworthy content for ranking.

How Do Search Engines Find and Index Web Pages?

They find pages by following links across the web, reading XML sitemaps, and revisiting known URLs; then they fetch and render the page, evaluate what it’s about (content, structure, metadata, internal links, and canonical signals), and decide whether to store it in the index. Clean site architecture, correct canonicals, and consistent internal linking help ensure important pages are indexed correctly.
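XML sitemaps are one of the discovery inputs mentioned above. A minimal sitemap in the sitemaps.org format can be generated with Python’s standard `xml.etree.ElementTree`; the URLs and dates here are hypothetical, and each sitemap file is limited to 50,000 URLs (or 50 MB uncompressed).

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(entries):
    """entries: iterable of (absolute URL, W3C date string or None)."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in entries:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc  # ElementTree escapes & as &amp;
        if lastmod:
            ET.SubElement(url, "lastmod").text = lastmod
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + ET.tostring(urlset, encoding="unicode")


if __name__ == "__main__":
    print(build_sitemap([
        ("https://example.com/", "2024-05-01"),
        ("https://example.com/blog/how-search-works", "2024-04-18"),
        ("https://example.com/shoes?color=red&size=9", None),
    ]))
```

List only canonical, indexable URLs in the sitemap; including redirected or noindexed pages sends crawlers mixed signals.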

How Do Search Engines Rank Results?

Ranking compares indexed pages against a query using signals like relevance to intent, content usefulness and depth, page experience and performance, internal and external authority signals, freshness when needed, and overall trust/credibility (often aligned with E-E-A-T). Sustainable ranking improvements come from aligning content to real queries, strengthening technical foundations, and building credible authority signals over time.

What Is Crawling, Indexing, And Ranking In SEO?

Crawling is when bots discover and fetch your pages, indexing is when search engines store and categorize those pages, and ranking is where they decide which indexed pages appear first for a search. SEO improves visibility by removing crawl barriers, ensuring the right pages get indexed, and earning stronger ranking signals through helpful content, technical quality, and trustworthy authority.