Site health serves as the foundation that prevents your content from being ignored by search engines and users alike, and technical SEO is what keeps that foundation stable. When technical issues plague your website, even the most compelling content becomes invisible to crawlers and frustrating for visitors. In this article, we diagnose the invisible errors costing you traffic and provide actionable solutions to restore your site’s technical foundation.

Key Takeaways

- Fix crawlability blockers like broken links, 404s, and redirect chains before you invest time in speed improvements.

- Core Web Vitals measure real user experience (load speed, responsiveness, and stability) and can factor into rankings, but they don’t outweigh relevance.

- Mobile-first indexing means your mobile experience heavily influences visibility, so responsive layouts and usable navigation are non-negotiable.

- HTTPS is a lightweight ranking signal and a major trust requirement, so secure every page and eliminate mixed content warnings.

- XML sitemaps, robots.txt, and a consistent audit cadence improve discovery, reduce crawl waste, and prevent technical regressions over time.

Crawlability First: Remove Navigation Barriers

Search engines encounter your website like visitors navigating a building with broken elevators and missing signs. Crawlability issues create immediate friction that prevents search bots from discovering and indexing your content properly. Fixing these navigation barriers takes priority over speed optimizations because uncrawlable pages generate zero organic traffic regardless of loading times.

Broken links are among the most common crawlability problems. These dead-end paths frustrate users and waste crawler resources during site exploration.

1. Identify and Fix Broken Internal Links

Internal broken links occur when pages link to URLs that no longer exist on your domain. Use tools like Screaming Frog or Google Search Console to identify 404 errors within your site structure. Replace broken internal links with updated URLs or remove references to deleted pages entirely.
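As a rough illustration of what a crawler does here, the sketch below extracts links from one page's HTML and flags internal targets that are not in a list of known-good paths. The function name `broken_internal_links`, the `known_paths` set, and the `example.com` URLs are assumptions for illustration; a real audit tool would fetch each target and check its status code instead.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin, urlparse


class LinkCollector(HTMLParser):
    """Collects href values from <a> tags."""

    def __init__(self):
        super().__init__()
        self.hrefs = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.hrefs.append(value)


def broken_internal_links(page_url, html, known_paths):
    """Return internal link paths on the page that are not in known_paths."""
    collector = LinkCollector()
    collector.feed(html)
    site_host = urlparse(page_url).netloc
    broken = []
    for href in collector.hrefs:
        target = urlparse(urljoin(page_url, href))
        if target.scheme not in ("http", "https"):
            continue  # skip mailto:, tel:, javascript:, etc.
        if target.netloc != site_host:
            continue  # external link, handled separately
        path = target.path or "/"
        if path not in known_paths:
            broken.append(path)
    return broken
```

Here `known_paths` stands in for the list of live URLs you would export from your CMS or a previous crawl.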

2. Address External Link Issues

External broken links point to removed or relocated content on other websites. While less critical than internal breaks, these still create poor user experiences. Check external links quarterly and update or remove outdated references to maintain site quality.

3. Resolve 404 Error Pages

A 404 response tells search engines and users that content is missing. Build a 404 inventory from the Page indexing report in Google Search Console (formerly the Coverage report). Add 301 redirects for important pages that have moved, and serve a custom 404 page that guides users back to relevant content for URLs that are genuinely gone.

4. Fix Redirect Chains

Redirect chains, where a URL redirects several times before reaching its final destination, slow crawlers down and can weaken the signals passed to the final page. Identify every URL that takes more than one hop to resolve, then update each redirect to point directly to the final URL.
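Flattening a chain is easy to reason about if you treat your redirects as a simple source-to-target map. The sketch below assumes such a map (for example, exported from your server config or a crawl); the function names `resolve_final` and `flatten_redirects` are hypothetical helpers, not part of any SEO tool.

```python
def resolve_final(url, redirects, max_hops=10):
    """Follow a redirect map and return (final_url, number_of_hops)."""
    seen = [url]
    while url in redirects:
        url = redirects[url]
        if url in seen or len(seen) > max_hops:
            raise ValueError(f"redirect loop or too many hops: {seen + [url]}")
        seen.append(url)
    return url, len(seen) - 1


def flatten_redirects(redirects):
    """Return a new map where every source points straight at its final URL."""
    return {src: resolve_final(src, redirects)[0] for src in redirects}
```

For a map like `{"/a": "/b", "/b": "/c"}`, the flattened version sends both `/a` and `/b` directly to `/c`, so no visitor or crawler takes more than one hop.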

5. Optimize URL Structure

Clean URL structures help crawlers understand page hierarchy and content relationships. Remove unnecessary parameters, use descriptive slugs, and maintain consistent URL patterns across your site. Avoid dynamic URLs with excessive parameters when possible.
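One way to see how much parameter noise your URLs carry is to normalize them and compare the result with the original. The sketch below strips a set of common tracking parameters and drops fragments; the `TRACKING_PARAMS` list and the `clean_url` name are assumptions, so adjust them to the parameters your own site actually uses.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumed list of parameters that never change page content.
TRACKING_PARAMS = {
    "utm_source", "utm_medium", "utm_campaign", "utm_term", "utm_content",
    "gclid", "fbclid",
}


def clean_url(url):
    """Lowercase scheme and host, drop tracking parameters and the fragment."""
    parts = urlsplit(url)
    kept = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key.lower() not in TRACKING_PARAMS
    ]
    kept.sort()  # stable parameter order so equivalent URLs compare equal
    return urlunsplit((
        parts.scheme.lower(),
        parts.netloc.lower(),
        parts.path or "/",
        urlencode(kept),
        "",  # fragments are not sent to the server
    ))
```

If many distinct crawled URLs collapse to the same cleaned URL, that is a hint that parameters are creating duplicate crawl paths.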

| Crawlability Issue | Detection Method | Fix Priority | Impact Level |
|---|---|---|---|
| Internal Broken Links | Screaming Frog Audit | High | Direct Ranking Loss |
| 404 Error Pages | Google Search Console | High | User Experience |
| Redirect Chains | HTTP Status Checker | Medium | Page Authority |
| External Broken Links | Manual Review | Low | Content Quality |

Core Web Vitals: Measure User Experience Impact

Core Web Vitals translate user experience into measurable metrics that can influence search rankings. These performance indicators capture loading speed, visual stability, and interaction responsiveness across your website. Google uses them as one of many ranking inputs, so they rarely outweigh relevance, but they can matter when competing pages are otherwise similar.

The three Core Web Vitals metrics each address specific user experience pain points during website interactions.

Largest Contentful Paint (LCP)

LCP measures loading performance by tracking when the largest content element becomes visible to users. Aim for LCP scores under 2.5 seconds for optimal user experience. Optimize images, reduce server response times, and eliminate render-blocking resources to improve LCP scores.

Interaction to Next Paint (INP)

INP measures responsiveness by evaluating how quickly your page responds visually after user interactions across the full visit. Target INP scores under 200 milliseconds for consistently responsive interactions. Reduce long JavaScript tasks, defer non-critical scripts, and keep the main thread free during interactions to improve responsiveness.

Cumulative Layout Shift (CLS)

CLS tracks visual stability by measuring unexpected layout movements during page loading. Maintain CLS scores under 0.1 to prevent content shifting that disrupts user reading. Reserve space for images and ads while avoiding dynamic content injection above existing elements.
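The three targets above can be turned into a simple pass/fail check for any page you measure. In the sketch below, the "good" limits are the ones quoted in this section; the "poor" cutoffs (4.0 s, 500 ms, 0.25) are taken from Google's published Core Web Vitals thresholds and are not stated in this article. The metric keys and function names are illustrative assumptions.

```python
# (good_max, poor_min) per metric; values in between are "needs improvement".
THRESHOLDS = {
    "lcp_s": (2.5, 4.0),    # Largest Contentful Paint, seconds
    "inp_ms": (200, 500),   # Interaction to Next Paint, milliseconds
    "cls": (0.1, 0.25),     # Cumulative Layout Shift, unitless
}


def rate(metric, value):
    """Classify one measurement as good, needs improvement, or poor."""
    good_max, poor_min = THRESHOLDS[metric]
    if value <= good_max:
        return "good"
    if value > poor_min:
        return "poor"
    return "needs improvement"


def rate_page(measurements):
    """Classify every metric in a {metric: value} dict."""
    return {metric: rate(metric, value) for metric, value in measurements.items()}
```

Google assesses these metrics at the 75th percentile of real user visits, so feed this kind of check field data where you have it instead of a single lab run.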

Mobile-Friendliness: Optimize for Primary Traffic Source

Mobile devices drive a major share of web usage, making mobile-friendliness a critical ranking factor rather than an optional enhancement. Google’s mobile-first indexing means the search engine primarily uses your mobile site version for ranking decisions. Poor mobile experiences directly impact search visibility and user engagement across all device types.

Mobile optimization extends beyond responsive design to encompass touch navigation, loading performance, and content accessibility on smaller screens.

1. Implement Responsive Design

Responsive design automatically adjusts layout elements to fit different screen sizes and orientations. Use flexible grid systems, scalable images, and CSS media queries to create seamless experiences across devices. Test your responsive implementation on various screen sizes and resolutions.

2. Optimize Touch Navigation

Touch interfaces require larger tap targets and intuitive gesture support for effective mobile navigation. Ensure buttons and links measure at least 44×44 CSS pixels for comfortable finger tapping. Space interactive elements adequately to prevent accidental taps on adjacent items.

3. Improve Mobile Page Speed

Mobile users expect fast loading times despite potentially slower network connections. Optimize images for mobile bandwidth, minimize HTTP requests, and prioritize above-the-fold content loading. Consider AMP only if it fits your stack and publishing workflow; otherwise prioritize Core Web Vitals and mobile UX improvements that benefit every page.

4. Enhance Mobile Content Readability

Mobile screens require careful content formatting for optimal readability without zooming or horizontal scrolling. Use legible font sizes (minimum 16px), appropriate line spacing, and sufficient color contrast. Break long paragraphs into shorter sections for easier mobile consumption.

5. Test Mobile Functionality

Regular mobile testing identifies usability issues that desktop testing might miss. Google has retired its standalone Mobile-Friendly Test tool, so run Lighthouse or Chrome DevTools device emulation for automated checks and conduct manual testing on actual devices. Pay attention to form functionality, navigation menus, and content accessibility on mobile platforms.

SSL Certificate and HTTPS: Secure User Data

SSL/TLS certificates encrypt data transmission between browsers and servers. HTTPS is a lightweight ranking signal, but its larger value is trust: it protects user information and supports the credibility that influences both search visibility and conversion rates. Modern browsers display security warnings for non-HTTPS sites, creating immediate credibility concerns for visitors.

SSL implementation requires technical configuration but provides immediate security and SEO benefits across your entire website.

Certificate Installation Process

Obtain SSL certificates from trusted certificate authorities like Let’s Encrypt, DigiCert, or your hosting provider.

- Install certificates on your web server

- Configure HTTPS redirects from HTTP versions

- Enable HTTP/2 or HTTP/3, which can improve load times through request multiplexing

- Update internal links to use HTTPS URLs

- Verify certificate validity through browser security indicators

Mixed Content Resolution

Mixed content occurs when HTTPS pages load HTTP resources, creating security vulnerabilities and browser warnings. Audit your site for HTTP images, scripts, and stylesheets loaded on HTTPS pages, then update every resource URL to its HTTPS version. Avoid protocol-relative URLs as a workaround; explicit https:// URLs are the safer choice now that HTTPS is the norm.
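A quick way to spot mixed content is to scan a page's HTML for resource tags that still point at `http://`. The sketch below is a minimal version of that audit using only the standard library; the `RESOURCE_ATTRS` set and the `find_mixed_content` name are assumptions, and it does not catch resources loaded from inside CSS or JavaScript.

```python
from html.parser import HTMLParser

# Tag/attribute pairs that cause the browser to load a resource.
RESOURCE_ATTRS = {
    ("img", "src"), ("script", "src"), ("iframe", "src"), ("link", "href"),
    ("video", "src"), ("audio", "src"), ("source", "src"),
}


class MixedContentFinder(HTMLParser):
    """Records (tag, url) for resources requested over plain HTTP."""

    def __init__(self):
        super().__init__()
        self.insecure = []

    def handle_starttag(self, tag, attrs):
        attr_map = dict(attrs)
        rel = (attr_map.get("rel") or "").lower()
        if tag == "link" and "stylesheet" not in rel:
            return  # e.g. rel="canonical" is a reference, not a loaded resource
        for name, value in attrs:
            if (tag, name) in RESOURCE_ATTRS and value and value.lower().startswith("http://"):
                self.insecure.append((tag, value))


def find_mixed_content(html):
    """Return a list of (tag, url) pairs loaded insecurely by the page."""
    finder = MixedContentFinder()
    finder.feed(html)
    return finder.insecure
```

Ordinary `<a href>` links to HTTP pages are deliberately ignored, since navigating to an HTTP page is not mixed content.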

XML Sitemap: Guide Search Engine Discovery

XML sitemaps give search engines a list of the URLs you want crawled, along with when each was last modified. Properly configured sitemaps help crawlers discover your important pages efficiently and signal your preferred URL set.

Sitemap optimization involves strategic page inclusion and regular maintenance to reflect current site structure accurately.

Sitemap Structure and Organization

Create separate sitemaps for different content types including pages, posts, images, and videos. Organize large sites using sitemap index files that reference individual category sitemaps. Include accurate last modification dates so crawlers can prioritize changed content. Priority values are optional, and Google has said it ignores them, so do not rely on them to steer crawling.
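If your CMS does not generate sitemaps for you, the file format is simple enough to produce with a short script. The sketch below builds a minimal sitemap with `loc` and `lastmod` only and leaves out the optional priority element; the `build_sitemap` name and the example URLs are assumptions for illustration.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(entries):
    """Build sitemap XML from (url, lastmod) pairs; lastmod is 'YYYY-MM-DD' or None."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in entries:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc  # special characters are escaped on output
        if lastmod:
            ET.SubElement(url, "lastmod").text = lastmod
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body
```

Calling `build_sitemap([("https://example.com/", "2024-01-15")])` returns a string you can write to `sitemap.xml`. Only set `lastmod` when you know the real modification date, since inaccurate dates make the field less useful to crawlers.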

Submission and Monitoring

Submit XML sitemaps through Google Search Console and Bing Webmaster Tools for optimal discovery. Monitor sitemap processing reports to identify indexing issues or crawl errors. Update sitemaps automatically when publishing new content or restructuring site navigation.

Robots.txt: Control Crawler Access

Robots.txt files instruct search engine crawlers which pages and directories to access or avoid during site exploration. Strategic robots.txt configuration prevents crawlers from wasting resources on unimportant pages while ensuring critical content remains discoverable. Proper implementation improves crawl budget efficiency for valuable pages, but it does not secure sensitive content—use authentication or noindex controls for that.

Robots.txt management requires careful balance between access control and content discovery optimization.

Essential Robots.txt Directives

Block crawler access to admin areas, private directories, and duplicate content using disallow directives. Allow access to important content sections and specify sitemap locations within robots.txt files. Test the configuration before deploying it, then confirm in Google Search Console's robots.txt report (which replaced the older robots.txt Tester) that Google fetched and parsed the file as expected.

Common Configuration Mistakes

Avoid blocking CSS and JavaScript files that affect page rendering and mobile-friendliness evaluation. Never use robots.txt to hide sensitive information since the file remains publicly accessible. Review robots.txt regularly to ensure directives align with current site structure and SEO goals.
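Before deploying a robots.txt change, it helps to check it against a list of URLs that must stay crawlable. The sketch below uses Python's built-in `urllib.robotparser`; the sample rules, the `example.com` URLs, and the `blocked_urls` helper are assumptions for illustration.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content for illustration.
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
Disallow: /cart/

Sitemap: https://example.com/sitemap.xml
"""

# URLs that should never be blocked, including rendering assets.
MUST_BE_CRAWLABLE = [
    "https://example.com/",
    "https://example.com/blog/technical-seo",
    "https://example.com/assets/site.css",
]


def blocked_urls(robots_txt, urls, user_agent="Googlebot"):
    """Return the subset of urls that the given rules disallow."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]
```

An empty result from `blocked_urls(ROBOTS_TXT, MUST_BE_CRAWLABLE)` means none of your critical URLs are blocked. Treat this as a sanity check only: Python's parser does not support wildcard patterns and applies rules in file order, while Google picks the most specific matching rule, so results can differ for complex files.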

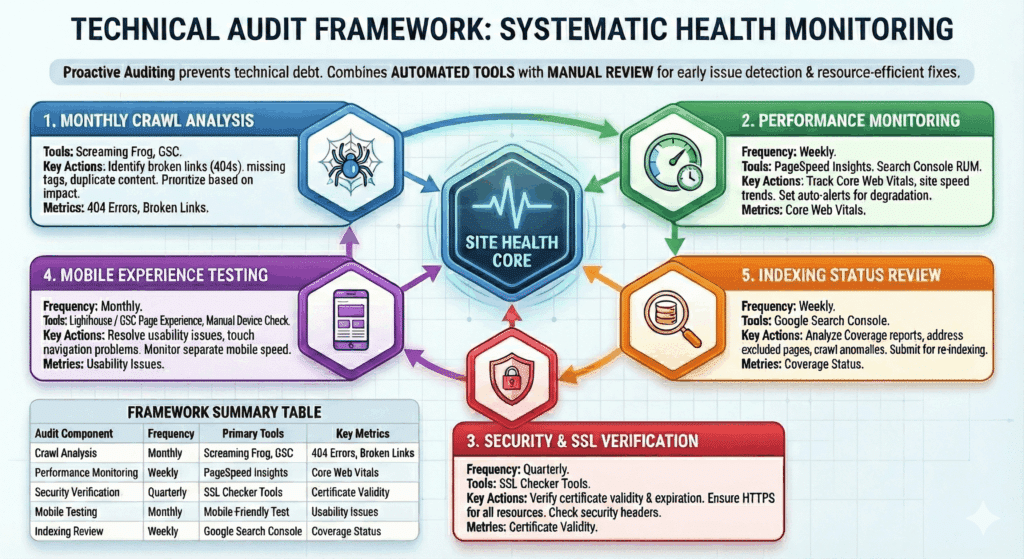

Technical Audit Framework: Systematic Health Monitoring

Regular technical audits identify emerging issues before they impact search rankings or user experience significantly. Systematic monitoring catches problems during early stages when fixes require minimal resources and technical intervention. Proactive auditing prevents small technical debt from accumulating into major site health obstacles that require extensive remediation efforts.

Effective audit frameworks combine automated tools with manual review processes to capture both obvious issues and subtle problems that impact site performance.

1. Monthly Crawl Analysis

Perform comprehensive site crawls using Screaming Frog or similar tools to identify broken links, missing meta tags, and duplicate content issues. Review crawl reports for new 404 errors, redirect problems, and page structure changes. Document findings and prioritize fixes based on traffic impact and user experience severity.

2. Performance Monitoring

Track Core Web Vitals scores using Google PageSpeed Insights and real user monitoring data from Search Console. Monitor site speed trends and identify pages with declining performance metrics. Set up automated alerts for significant performance degradation or Core Web Vitals threshold violations.

3. Security and SSL Verification

Verify SSL certificate validity and expiration dates so an expired certificate never triggers browser security warnings that drive visitors away. Check for mixed content issues and ensure all resources load securely over HTTPS connections. Monitor security headers and implement additional protections like Content Security Policy when appropriate.
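Expiry checks are easy to automate once you have the certificate's `notAfter` date, which Python exposes through `ssl.SSLSocket.getpeercert()`. The sketch below covers only the date arithmetic, assuming you already have that string; the helper names and the 30-day warning window are illustrative assumptions.

```python
import ssl

SECONDS_PER_DAY = 86400


def days_until_expiry(not_after, now_epoch):
    """Whole days between now_epoch and a certificate's notAfter timestamp.

    not_after uses the certificate date format, e.g. "Jun 15 12:00:00 2030 GMT".
    """
    expires_epoch = ssl.cert_time_to_seconds(not_after)
    return int((expires_epoch - now_epoch) // SECONDS_PER_DAY)


def needs_renewal(not_after, now_epoch, warn_days=30):
    """True when the certificate expires within warn_days (or already has)."""
    return days_until_expiry(not_after, now_epoch) < warn_days
```

In a scheduled job you would pass `time.time()` as `now_epoch` and send an alert whenever `needs_renewal` returns true.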

4. Mobile Experience Testing

Conduct regular mobile usability testing using Lighthouse's mobile audits and manual device testing. Google has retired the Mobile Usability report in Search Console, so track touch navigation and layout problems through your own testing. Monitor mobile page speed separately from desktop performance metrics.

5. Indexing Status Review

Analyze Google Search Console coverage reports to identify indexing problems and excluded pages. Review submitted URLs versus indexed pages to understand crawler behavior patterns. Address crawl anomalies and submit important pages for re-indexing when necessary.
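Comparing submitted URLs with indexed URLs is a set comparison at heart. The sketch below assumes you have exported both lists (for example, from your sitemap and from Search Console); the `indexing_gaps` name and the output keys are hypothetical.

```python
def indexing_gaps(submitted, indexed):
    """Compare submitted sitemap URLs against the URLs reported as indexed."""
    submitted, indexed = set(submitted), set(indexed)
    coverage = len(submitted & indexed) / len(submitted) if submitted else 0.0
    return {
        # In the sitemap but not indexed: candidates for investigation.
        "submitted_not_indexed": sorted(submitted - indexed),
        # Indexed but missing from the sitemap: possible duplicates or strays.
        "indexed_not_submitted": sorted(indexed - submitted),
        "coverage": round(coverage, 2),
    }
```

A falling coverage ratio between audits is a useful early signal that something is blocking indexing, even before traffic drops.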

| Audit Component | Frequency | Primary Tools | Key Metrics |
|---|---|---|---|
| Crawl Analysis | Monthly | Screaming Frog, GSC | 404 Errors, Broken Links |
| Performance Monitoring | Weekly | PageSpeed Insights | Core Web Vitals |
| Security Verification | Quarterly | SSL Checker Tools | Certificate Validity |
| Mobile Testing | Monthly | Lighthouse, Device Testing | Usability Issues |
| Indexing Review | Weekly | Google Search Console | Coverage Status |

At Digit Solutions, our technical audit frameworks help businesses maintain healthy sites that support long-term organic growth. Our systematic approach identifies issues before they impact rankings while providing clear remediation roadmaps that align with your business goals and technical resources.

4 Platforms That Help You Execute (and Maintain) Technical SEO Fixes

Once you’ve identified crawlability blockers, Core Web Vitals gaps, mobile UX issues, and indexing friction, the fastest way to turn the audit into results is to use tools built for technical diagnosis and ongoing monitoring. The platforms below map directly to the workflows in this guide, so fixes don’t get missed and regressions don’t creep back in.

Semrush

Semrush’s Site Audit is well suited to uncovering crawlability problems like broken links, 404s, redirect chains, and messy URL patterns, which are exactly the “fix crawlability first” priorities in this guide. It also helps you track technical issue trends over time so your audit cadence becomes repeatable instead of a one-off cleanup.

SE Ranking

SE Ranking supports structured technical auditing so you can systematically find and prioritize indexability and crawl issues (errors, redirects, duplicate or parameterized URLs) before moving into performance work. It’s also useful for keeping technical health checks consistent, matching the audit framework’s approach of monitoring, validating, and preventing regressions.

Sitechecker

Sitechecker is a practical fit for ongoing site health monitoring, helping you catch new technical issues early, such as fresh 404s, redirect changes, or crawl barriers introduced during updates. This aligns with the systematic health monitoring section above by turning audits into continuous visibility instead of periodic surprises.

NitroPack

NitroPack is built to improve real-world speed and stability signals that feed Core Web Vitals (like LCP and CLS), which supports the performance and UX sections of this guide once crawlability is handled. It’s especially helpful when you need quicker wins on page performance without rebuilding your entire front-end stack.

Conclusion

Site health optimization removes friction between your content and search engine discovery while creating seamless user experiences. Focus on crawlability fixes first, then optimize Core Web Vitals and mobile performance for sustained organic growth. Regular technical audits prevent small issues from becoming major ranking obstacles.

Digit Solutions specializes in technical SEO audits and structured content systems that fix site health issues at their core. Our data-driven approach identifies exactly what’s blocking your organic growth and provides clear implementation steps. Get started with a comprehensive technical audit today.

FAQs

What Is Technical SEO?

Technical SEO is the work that helps search engines discover, crawl, render, and index your site reliably—while improving speed, structure, and overall usability. It focuses on the behind-the-scenes foundations (like site architecture, performance, and crawlability) that make your content easier to rank and your site easier to use.

What Are the Main Components of Technical SEO?

The main components include crawlability and indexability (robots.txt, sitemaps, canonical tags), site architecture and internal linking, page speed and Core Web Vitals, mobile friendliness, structured data (schema), HTTPS and security, duplicate content control, and clean status codes/redirects. At Digit Solutions, we prioritize the items that directly impact visibility and conversions—then validate improvements in Search Console and third-party tools.

How Do I Do a Technical SEO Audit?

Start by checking Google Search Console for indexing and Core Web Vitals issues, then crawl your site with a tool like Semrush or Ahrefs to find broken links, redirect chains, duplicate pages, missing canonicals, and thin indexable URLs. Next, review performance (LCP/INP/CLS), mobile rendering, sitemap/robots setup, internal linking depth, and structured data. The final step is turning findings into a prioritized plan based on impact and effort, which is how we keep audits actionable instead of overwhelming.

What Is the Difference Between Technical SEO and On-Page SEO?

Technical SEO ensures your site can be crawled, indexed, and performs well; on-page SEO focuses on what’s on each page—content quality, keyword targeting, headings, internal links, and metadata. They work together: strong content won’t perform if the site has crawl or speed issues, and perfect technical health won’t help if the pages don’t match search intent.

How Much Does Technical SEO Cost?

Costs vary based on site size, platform complexity, and how many issues require developer support. Many businesses invest anywhere from a few hundred dollars for a small one-time review to several thousand per month for ongoing technical SEO and implementation. We typically scope pricing around what will move the needle fastest—pairing a clear audit with a prioritized roadmap and measurable outcomes.